Slides from my talk at the OARN 2012 conference on augmented reality.

This is the connected world, a journal by Gene Becker of Lightning Laboratories focusing on the confluence of ubiquitous computing, augmented reality, social media & the internet of things.

Last week at Where 2.0 and Wherecamp, the air was full of AR augments. Between the locative photos in the Instagram layer, the geotagged tweets in TweepsAround, and the art/protest layer called freespace, there were many highly visual, contextually interesting AR objects being generated, occupying and flowing through the event spaces. These were invisible of course, until viewed through the AR lens. I found myself becoming very aware of this hidden dimension, wondering what new objects might have appeared, what I might encounter if I peered through the looking glass right here, right now. And then I found myself taking pictures in AR, because I was discovering moments that seemed worth capturing and sharing.

Larry and Mark weren’t physically at Where 2.0, but their perceived presence loomed large over the proceedings. Those are clever mashups on the Obey Giant theme as well; what are they trying to say here?

At Wherecamp on the Stanford campus, locative social media were very much in evidence. Here, camp organizer @anselm and AR developer @pmark were spotted in physical/digital space.

The freespace cabal apparently thought the geo community would be receptive to their work, although it seemed some of the messages were aimed at a different audience. The detention of Chinese artist Ai Weiwei is a charged topic, certainly.

So you’ll note that although these are all screenshots from the AR view in Layar, I’m referring to them as photographs in their own right. It’s a subtle shift, but an interesting one. For me, this new perspective is driven by several factors: the emergence of visually interesting and contextually relevant AR content, the idea that AR objects are vectors for targeted messages, and the new screenshot and share functions which make Layar seem more like a social digital camera app. I’m finding myself actively composing AR photos, and thinking about what content I could create that would make good AR pictures other people would want to take. Oh, and that awkward AR holding-your-phone-up gesture? I’m taking pictures, what could be more natural?

AR photography feels like it might be important. What do you think?

[cross-posted from the Layar blog]

In my recent Ignite talk Hijacking the Here and Now: Adventures in Augmented Reality, I showed examples of how creative people are using AR in ways that modify our perceptions about time and space. Now, Ignite talks are only 5 minutes long and I think this is a big idea that’s worth a deeper look. So here’s my claim: I assert that one of the most natural and important uses of AR as a creative medium is hacking space and time to explore and make sense of the emerging physical+digital world.

When you look at who the true AR enthusiasts are, who is doing the cutting-edge creative work in AR today, it’s artists, activists and digital humanities geeks. Their projects explore and challenge the ideas of ownership and exclusivity of physical space, and the flowing irreversibility of time. They are starting to see AR as the emergence of a new construction of reality, where the physical and digital are no longer distinct but instead are irreversibly blended. Artist Sander Veenhof is attracted to the “infinite dimensions” of AR. Stanford Knight Fellow Adriano Farano sees AR ushering in an era of “multi-layer journalism”. Archivist Rick Prelinger says “History should be like air,” immersive, omnipresent and free. And in their recent paper Augmented Reality and the Museum Experience, Schavemaker et al. write:

In the 21st century the media are going ambient. TV, as Anna McCarthy pointed out in Ambient Television (2001), started this great escape from domesticity via the manifold urban screens and the endless flat screens in shops and public transportation. Currently the Internet is going through a similar phase as GPS technology and our mobile devices offer via the digital highway a move from the purely virtual domain to the ‘real’ world. We can collect our data everywhere we desire, and thus at any given moment transform the world around us into a sort of media hybrid, or ‘augmented reality’. [emphasis mine]

When the team behind PhillyHistory.org augments the city of Philadelphia with nearly 90,000 historical photographs in AR, they are actively modifying our experience of the city’s space and connecting us to moments in time long past. With its ambitious scope and scale, this seems a particularly apt example of transforming the world into a media hybrid.

In their AR piece US/Iraq War Memorial, artists Mark Skwarek and John Craig Freeman transpose the locative datascape of casualties in the Iraq War from Wikileaks onto the northeastern United States, with the location of Baghdad mapped onto the coordinates of Washington DC. In addition to spatial hackery evocative of Situationist psychogeographic play, this work makes a strong political statement about control of information, nationalist perspectives and the cultural abstraction of war.

Now let’s talk about this word, ‘hacking’. Actually, you’ll note that I used the term ‘hijacking’ as well, so let’s include that too. My intent is to evoke the tension of multiple meanings: Hacking in the sense of gaining deep understanding and mastery of a system in order to modify and improve it, and as a visible demonstration of a high degree of proficiency. Also, hacking in the sense of making unauthorized intrusions into a system, including both white hat and black hat variations. I use ‘hijacking’ in the sense of a mock takeover, like the Black Eyed Peas playfully hijacking the myspace.com website for publicity purposes, but also hijacking as an antagonistic, possibly malign, and potentially unlawful attack. In the physical+digital augmented world, I expect we will see a wide variety of hacking and hijacking behaviors, with both positive and negative effects. For example, in Skwarek’s piece with Joseph Hocking, the leak in your hometown, the corporate logo of BP becomes the trigger for an animated re-creation of the iconic broken pipe at the Macondo wellhead, spewing AR oil into your location. It is possible to see this as an inspired spatial hack and a biting social commentary, but I have no doubt BP executives would consider it a hijacking of their brand in the worst way.

In his book Smart Things, ubicomp experience designer Mike Kuniavsky asks us to think of digital media about physical entities as ‘information shadows’; I believe the work of these AR pioneers points us toward a future where digital information is not a subordinate ‘shadow’ of the physical, but rather a first-class element of our experience of the world. Even at this early stage in the development of the underlying technology, AR is a consequential medium of expression that is being used to tell meaningful stories, make critical statements, and explore the new dimensionality of a blended physical+digital world. Something important is happening here, and hacking space and time through AR is how we’re going to understand and make sense of it.

Welcome! This tutorial will show you how to create mobile AR using the Layar platform and Hoppala CMS service, with no programming required. I’ve kept it simple on purpose — both Layar and Hoppala have additional capabilities you should take the time to explore; for the technically inclined, the Layar developer wiki is a good place to start.

1. What you need to create your first mobile AR layer:

* A smartphone that supports the Layar AR browser. This means an iPhone 3GS or 4, or an equivalent Android device that has built-in GPS and compass. As of March 2011, Symbian S3 and S60 devices should also work, as should the Apple iPad 2.

* The Layar app, downloaded onto your device from the appropriate app store.

* A computer with web access.

2. Get connected:

You’ll need to create a developer account with Layar and an account on the Hoppala Augmentation content management system (CMS). This should only take a few minutes:

* The Hoppala website: http://augmentation.hoppala.eu

* The Layar developer website: http://layar.com/publishing

Once you have your accounts, sign in to both sites and to the Layar app on your device.

3. Get started:

When you log into Hoppala, you should see the Dashboard, a simple list of your layers with Titles, Names and Overlay URLs.

At the bottom right of the page, click Add Overlay to create a new layer. A new entry will be added to the list, with Untitled, noname and a long, ugly URL. On the far right of that entry line, click the pencil icon to edit and give your layer a new title and name. The name needs to be all lowercase alphanumeric. Click the Save button.
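
If you would like to check a layer name before typing it in, here is a minimal Python sketch. It is not part of Hoppala or Layar. I am assuming that "lowercase alphanumeric" means only the letters a-z and the digits 0-9, and the function names are my own invention for illustration.

```python
import re

# Assumption: "lowercase alphanumeric" means only a-z and 0-9, with no
# spaces, hyphens or underscores. Hoppala's exact rule may differ.
LAYER_NAME_PATTERN = re.compile(r"[a-z0-9]+")


def is_valid_layer_name(name: str) -> bool:
    """Return True if the name uses only lowercase letters and digits."""
    return LAYER_NAME_PATTERN.fullmatch(name) is not None


def suggest_layer_name(title: str) -> str:
    """Derive a candidate layer name from a free-form title.

    Lowercases the title and drops every character that is not a-z or 0-9.
    """
    return re.sub(r"[^a-z0-9]", "", title.lower())


if __name__ == "__main__":
    print(is_valid_layer_name("Hello World"))
    print(suggest_layer_name("Hello World"))
```

Since the same name has to be typed again later on the Layar developer site, a short, simple name saves trouble.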

Next, click on the name of your new layer. This will open a Google Map-based page. Use the map controls or enter your address to navigate to your current location and zoom in.

To add a point of interest (POI), click Add augment at the bottom right of the page. This will add a basic POI called Untitled in the center of the map. You can drag it to the location you want.

To customize your new POI, click on the red map pin and a popup will open. The popup has 4 tabs, labeled General, Assets, Actions and Location. Each tab is a form we will use to enter data about the POI. For now, don’t worry about the Location tab.

GENERAL

* The title and description fields can be whatever text you want. The title is limited to 60 characters, and each description line can be 35 characters. Note that long text strings may not display fully on a small device screen. Try typing HELLO WORLD as your title.

* Thumbnail is the picture that is displayed in the POI’s information panel in the mobile app view. You can upload your own thumbnail from your computer by using Choose File and then Add.

* You can ignore the Footnote and Filter value fields for now.

* BE SURE TO CLICK THE SAVE BUTTON and wait for the confirmation.
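
If you are preparing text for many POIs, it can help to check it against the limits above before pasting it into the form. The following is a rough Python sketch using only the two numbers mentioned in this tutorial (60 characters for the title, 35 per description line). The function names are hypothetical, and it does not talk to Hoppala at all.

```python
import textwrap

# Limits taken from the tutorial text above.
TITLE_MAX = 60
DESCRIPTION_LINE_MAX = 35


def check_poi_text(title, description_lines):
    """Return a list of problems found; an empty list means the text fits."""
    problems = []
    if len(title) > TITLE_MAX:
        problems.append(
            f"title is {len(title)} characters (limit {TITLE_MAX})"
        )
    for number, line in enumerate(description_lines, start=1):
        if len(line) > DESCRIPTION_LINE_MAX:
            problems.append(
                f"description line {number} is {len(line)} characters "
                f"(limit {DESCRIPTION_LINE_MAX})"
            )
    return problems


def wrap_description(text):
    """Split one long description into lines that each fit the limit."""
    return textwrap.wrap(text, width=DESCRIPTION_LINE_MAX)


if __name__ == "__main__":
    lines = wrap_description(
        "A 1905 photograph of the Main Quad, shown in place in AR."
    )
    for problem in check_poi_text("HELLO WORLD", lines):
        print(problem)
    print(lines)
```

Text that passes this check may still be cut off on a small screen, so it is worth looking at the result on your own device.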

ASSETS

* Icons are the small graphics that show up in the AR view for basic POIs. Choose default (you can create custom icons later if you like).

* Assets are 2D images or 3D objects that appear in the AR view. You can upload your own assets using Choose File and then Add. Images can be .jpg or .png; 3D objects must be in Layar’s .l3d format.

* Note that Hoppala supports some non-Layar AR browsers. You can ignore any sections for “junaio” and “Wikitude”.

* BE SURE TO CLICK THE SAVE BUTTON and wait for the confirmation.

ACTIONS

* In the Layar browser, you can have actions triggered from POIs. These can include going to a website, playing audio or video, sending a tweet, an email or text, and making a phone call.

* Hoppala allows you to include up to 8 actions per POI.

* Actions can appear as buttons for the user to click, or they can be auto-triggered based on the user’s proximity to the POI location.

* Try adding a link to a website. For Label, type Google. Select ‘Website’ in the pulldown menu. Type http://google.com for the URL.

* BE SURE TO CLICK THE SAVE BUTTON and wait for the confirmation.
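
For anyone planning a layer on paper first, here is a small Python sketch for sanity-checking a list of planned actions against the limit of 8 per POI. The `PlannedAction` class and its fields are my own invention for illustration and do not reflect how Hoppala or Layar store actions. The URL check is likewise an assumption: it only confirms that a website link starts with http or https and names a host.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Limit taken from the tutorial text above.
MAX_ACTIONS_PER_POI = 8


@dataclass
class PlannedAction:
    """A hypothetical planning record, not Hoppala's own data format."""
    label: str                  # button text, e.g. "Google"
    kind: str                   # entry from the pulldown menu, e.g. "Website"
    target: str                 # for a website action, the URL
    auto_trigger: bool = False  # True if triggered by proximity


def check_actions(actions):
    """Return a list of problems found; an empty list means no issues."""
    problems = []
    if len(actions) > MAX_ACTIONS_PER_POI:
        problems.append(
            f"{len(actions)} actions planned (limit {MAX_ACTIONS_PER_POI})"
        )
    for action in actions:
        if action.kind == "Website":
            parts = urlparse(action.target)
            if parts.scheme not in ("http", "https") or not parts.netloc:
                problems.append(
                    f"'{action.label}' needs a full URL such as http://google.com"
                )
    return problems


if __name__ == "__main__":
    plan = [PlannedAction("Google", "Website", "http://google.com")]
    print(check_actions(plan))
```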

You can add more POIs, or move on to configuring and testing the layer.

4. Configure your layer:

Log into the Layar developer site. At the top right of the page, click My Layers and you will see a table of your existing layers, if any.

To add your new layer, click the Create a layer button. You will see a popup form.

* Layer name must be exactly the same as the name you chose in Hoppala.

* Title can be a friendly name of your choosing.

* Layer type should be whichever type you have made. If you used a 2D image or 3D model as an asset, select ‘3D and 2D objects in 3D space’.

* API endpoint URL is the URL for your layer, which you can copy from the Hoppala dashboard (the long ugly one).

* Short description is just some text.

Click Create layer and you should be done!

(There are lots more editing options, but you can safely ignore them for now.)

5. Test your layer:

Start up the Layar app on your mobile device. Be sure you are logged in to your Layar developer account, or you will not see your unpublished test layer. Select LAYERS, and then TEST. You should see your test layer listed. Note: older versions of the Layar app may put the TEST listing in different places, so you may need to poke around a bit. Select your layer and LAUNCH it. Now look for your POIs and see if they came out looking the way you had expected.

Congratulations, you are now an AR author!

Excited (and a bit nervous ;-) about doing my first ever Ignite talk, next Monday 3/28 in SF. I’ve got 5 minutes to get through 20 auto-advancing slides on the subject of “Hijacking the Here and Now: Adventures in Augmented Reality.” Here’s what I said in my submission:

Augmented reality is all about webcam marketing gimmicks and filling the world with geotagged logos, right? Nope, wrong. Instead, we’re learning that the natural mode of expression for AR is enabling people to *hack time and space*. In 5 minutes, I’ll show you ~20 solid examples of how artists, journalists, historians and citizen activists are using augmented reality to hijack the here and now.

If you’re in San Francisco on Monday, come by and check it out — there are loads of fun speakers lined up, and it would be great to see some familiar faces! Details here: http://www.facebook.com/event.php?eid=192821184089845

Our Layar augmented reality Christmas card is live at http://m.layar.com/open/xmaslayar on your mobile, where you can throw snowballs at your favorite Layar team member and leave your wishes for us! Have a great holiday!

I have a bit of news: I’m joining Layar, the Dutch mobile AR company, as an Augmented Reality Strategist. \0/ In this new role I’ll be developing the creative ecosystem for the Layar platform, working with artists, developers, agencies, brands and media geeks to push the boundaries of AR experience design. As the first US-based team member, I’ll also be helping establish Layar’s Bay Area presence and growing the North American community of mobile AR enthusiasts. There’s more at the Layar blog.

Signing on with the Layar team feels like a natural evolution to me. I’ve worked at the intersection of digital media and physical reality for more than a decade, and mobile AR is one of the most important world-changing developments in that field. I believe that today’s mobile AR is the leading edge of an emerging new medium of expression and communication, a vision the Layar team shares. The chance to help shape something this important, working with a team of this caliber…well let’s just say it’s going to be fun ;-)

As for Lightning Laboratories, I’ll still be blogging here and sending out the occasional Connected World newsletter. However, the consulting part of the business will be offline for the foreseeable future. My social media practice will move over to TrendJammer, the new social consulting & analytics venture that my friend Risto Haukioja has launched, and for which I’m an advisor. If you’re interested in that sort of thing, there’s lots of fun going on at trendjammer.com and @trendjamr on Twitter.

If you want to get in touch, the usual channels still apply: @genebecker, @ubistudio, gene at lightninglaboratories dot com, etc. For super-double-secret Layar business, I’m now gene at layar dot com. Now let’s get out there, people — we’ve got a new medium to build!

In collaboration with Adriano @Farano, I’ve been experimenting with creating historical experiences in augmented reality. Adriano’s on a Knight Fellowship at Stanford, and he’s seeking to push the boundaries of journalism using AR; my focus is developing new approaches to experience design for blended physical/digital storytelling, so our interests turn out to be nicely complementary. This is also perfectly aligned with the goals of @ubistudio, to explore ubiquitous media and the world-as-platform through hands-on learning and doing.

Adriano’s post about our first playtesting session, Rapid prototyping in Stanford’s Main Quad, included this image:

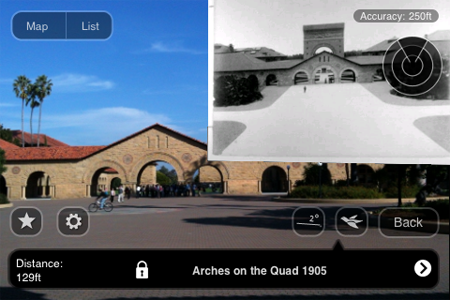

Taken from the interior of the Quad looking toward the Oval and Palm Drive, the photo aligns reasonably well with the real scene. Notably, the 1905 picture reveals a large arch in the background, which no longer stands today. We later found out this was Memorial Arch, which was severely damaged in the great 1906 earthquake and subsequently demolished.

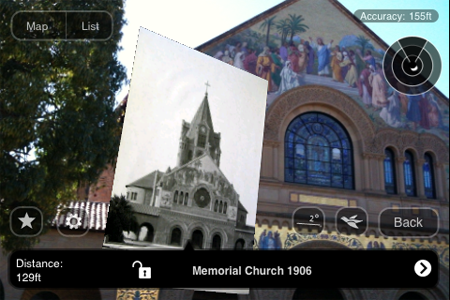

In our second playtesting session, we continued to experiment with historical images of the Quad using Layar, Hoppala and my iPhone 3GS as our testbed. Photos were courtesy of the Stanford Archives. This view is from the front entrance to the Quad near the Oval, looking back toward the Quad. Here you can see the aforementioned Memorial Arch in 1906, now showing heavy damage from the earthquake. The short square structure on the right in the present day view is actually the right base of the arch, now capped with Stanford’s signature red tile roof.

In this screencap, Arches on the Quad 1905 is showing as the currently selected POI, even though the photo is part of a different POI.

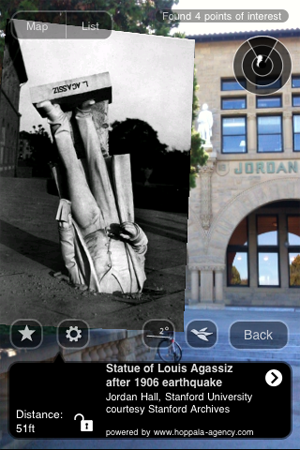

One of the more famous images from post-earthquake Stanford is this one, the statue of Louis Agassiz embedded in a walkway:

Although the image is scaled a bit too large to see the background well, you can make out that we are in front of Jordan Hall; the white statue mounted above the archway on the left is in fact the same one that is shown in the 1906 photo, nearly undamaged and restored to its original perch.

Finally we have this pairing of Memorial Church in 2010 with its 1906 image. In the photo, you can see the huge bell tower that once crowned Mem Chu; it too was destroyed in the earthquake.

Each of these images conveys some idea of the potential we see in using AR to tell engaging stories about the world. The similarities and differences seen over the distance of a century are striking, and begin to approach what Reid et al. defined as “magic moments” of connection between the virtual and the real [Magic moments in situated mediascapes, pdf]. However, there are many problematic aspects of today’s mobile AR experience that impose significant barriers to reaching those compelling moments. And so, the experiments continue…